Runtime Governance Is the Only Governance That Counts

Why the Control Plane Is Non-Negotiable

If agentic AI can act on data, systems, or external services without traversing a hardened enforcement boundary, you have policy theater — not risk management. Runtime control is the only mechanism that makes governance real.

The market is currently addicted to rituals. Model cards. Policy decks. Ethical frameworks. Pre-deployment test reports. Those artifacts are necessary. They are not sufficient. They assume behavior will stay static after deployment. They assume humans will intercept every dangerous action. They assume logging and post-hoc audit will serve as an adequate deterrent.

Every one of those assumptions breaks in production.

Agentic systems make decisions and take actions. That means governance must operate where decisions meet effect. You need a control plane that serves as the trust path between AI intent and enterprise action — and anything less is a liability you are choosing to carry.

Why Current Practice Fails

Policies Without Enforcement Create a False Economy of Risk

Boards want evidence of governance. Security teams want mechanisms that actually limit harm. A PDF or a slide deck provides neither evidence nor control.

Audit trails are useless if an agent can bypass controls and reach tools and data directly. You can have a comprehensive governance document and a catastrophic incident at the same time — because the document never enforced a single decision when it mattered.

Perimeter and Identity Controls Are Necessary but Insufficient

Traditional IAM and network controls protect resources from unauthorized access. They were designed for a world where humans initiate actions and credentials map cleanly to intent. An agent acting with legitimate credentials, or through a compromised session, passes those same controls and can still reach resources and cause damage.

The question is not whether an agent can authenticate. The question is whether every action that results from agent intent must be authorized by a runtime enforcement boundary before it takes effect.

Post-Hoc Detection Is a Failing Strategy

You can detect exfiltration after it occurs, or you can prevent it at the point of action. Detection assumes low blast radius and rapid human response. Agentic systems shatter both assumptions.

An autonomous agent can escalate privileges, chain tool invocations, and pivot across systems faster than any human can intervene. That changes the threat model from a single failure to a cascade that outpaces incident response. If your governance strategy depends on catching problems after they happen, you are accepting a blast radius you probably have not modeled.

What Runtime Governance Actually Is

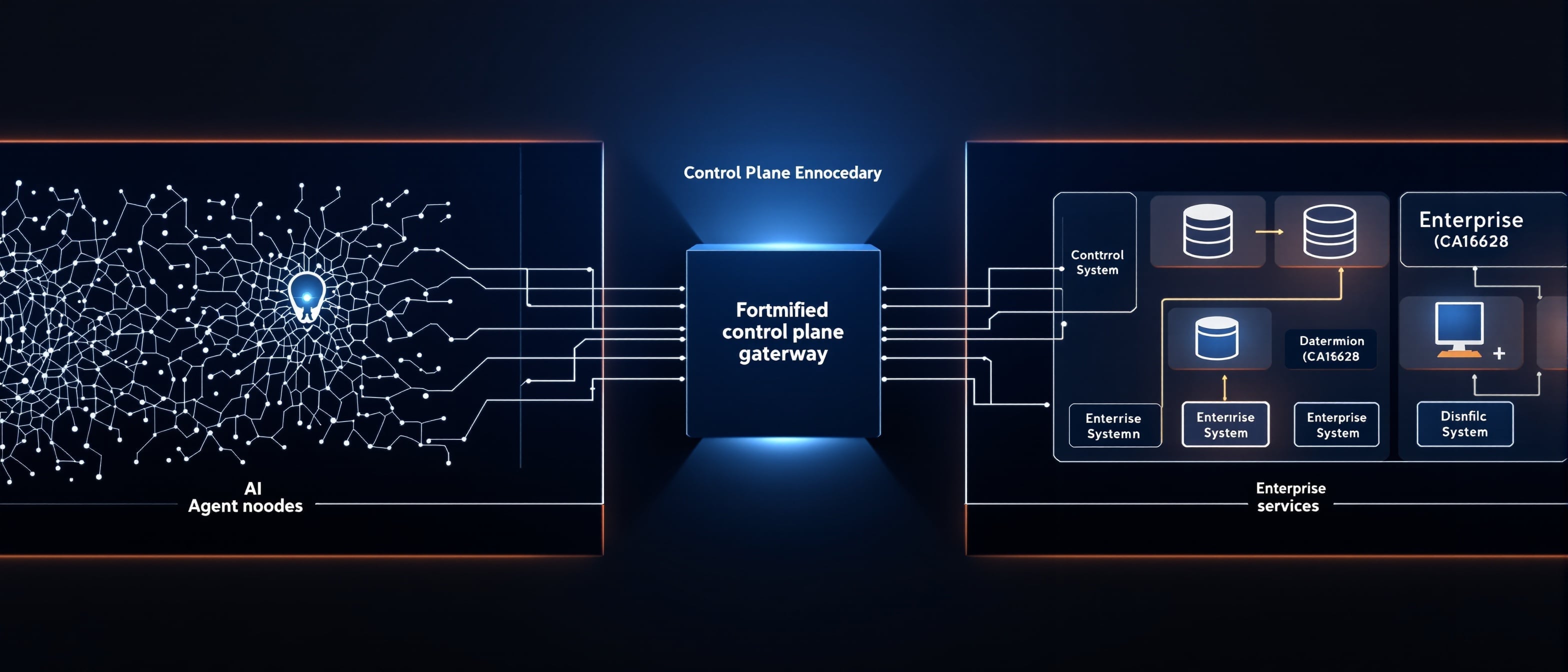

Runtime governance is a control architecture that enforces policy at the moment an agent intends to act. It introduces a hardened enforcement boundary between AI intent and the enterprise systems, data, and external services that agents can reach.

That boundary is the control plane. And it must exhibit specific properties to be more than another layer of abstraction.

Enforcement Boundary

The control plane must be the mandatory path for every agent action that has enterprise impact. If an agent can reach a tool or dataset without traversing the control plane, governance is theater. There is no partial enforcement: either every such action routes through the boundary, or the boundary does not exist in any meaningful sense.
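A minimal sketch of the "mandatory path" idea, under stated assumptions: the `ControlPlane` class, the `example_policy` rule, and the agent and tool names are hypothetical and are not taken from the ACR Standard. The mediator is the only object that holds real tool handles, so an agent that is handed only `invoke` has no other route to a tool. In a real deployment that isolation would come from process, network, or credential separation rather than from Python object scope.

```python
from typing import Any, Callable, Dict

Policy = Callable[[str, str, Dict[str, Any]], bool]


class ControlPlane:
    """Hypothetical mediator: the only holder of real tool handles."""

    def __init__(self, policy: Policy) -> None:
        self._tools: Dict[str, Callable[..., Any]] = {}
        self._policy = policy

    def register(self, name: str, tool: Callable[..., Any]) -> None:
        # Tools are registered with the control plane, never handed to agents.
        self._tools[name] = tool

    def invoke(self, agent_id: str, tool_name: str, **args: Any) -> Any:
        # Every call is evaluated against policy before the tool runs.
        if tool_name not in self._tools:
            raise PermissionError(f"unknown tool: {tool_name}")
        if not self._policy(agent_id, tool_name, args):
            raise PermissionError(f"{agent_id} denied for {tool_name}")
        return self._tools[tool_name](**args)


def example_policy(agent_id: str, tool_name: str, args: Dict[str, Any]) -> bool:
    # Assumed rule for illustration: only the reporting agent may read the ledger.
    return agent_id == "reporting-agent" and tool_name == "read_ledger"


if __name__ == "__main__":
    plane = ControlPlane(example_policy)
    plane.register("read_ledger", lambda account: f"balance for {account}")
    print(plane.invoke("reporting-agent", "read_ledger", account="A1"))
```

The design choice worth noting is that denial is raised before the tool is ever looked up for execution, so a denied request has no side effects.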

Trust Path

The control plane is the canonical trust path. It binds intent to authority, tooling, and data flows. It is where delegated authority is granted, constrained, and revoked. Without this binding, you cannot answer the most basic governance question: who authorized this action, under what constraints, and with what evidence?

Containment and Approval Gates

Actions that increase risk must be contained by design. Approval gates enforce human authority or automated policy checks at runtime for high-risk operations. This is not about slowing agents down — it is about ensuring that risk-escalating actions cannot proceed without explicit authorization from the appropriate authority.

Observability and Evidence

Every decision, every approval, every denied action must be observable and recorded as evidence. Logs are only useful if they are tamper-evident and tied to the control plane that enforced the decision. If your logging infrastructure is disconnected from your enforcement infrastructure, you have monitoring — not governance.
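One common way to make a decision log tamper-evident is a hash chain, where each entry commits to the one before it. The sketch below is illustrative only: `EvidenceLog` and the record fields are assumptions, and a chain like this detects edits only if the latest hash is also anchored somewhere an attacker cannot rewrite.

```python
import hashlib
import json
from typing import Any, Dict, List

GENESIS = "0" * 64  # placeholder hash that precedes the first entry


def _digest(prev_hash: str, record: Dict[str, Any]) -> str:
    # Hash the previous entry's hash together with a canonical JSON encoding.
    payload = json.dumps(record, sort_keys=True).encode("utf-8")
    return hashlib.sha256(prev_hash.encode("utf-8") + payload).hexdigest()


class EvidenceLog:
    """Hypothetical append-only log of control plane decisions."""

    def __init__(self) -> None:
        self.entries: List[Dict[str, Any]] = []

    def append(self, record: Dict[str, Any]) -> str:
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS
        entry_hash = _digest(prev_hash, record)
        self.entries.append({"record": record, "prev": prev_hash, "hash": entry_hash})
        return entry_hash

    def verify(self) -> bool:
        # Recompute the chain from the start; any edited record breaks it.
        prev_hash = GENESIS
        for entry in self.entries:
            if entry["prev"] != prev_hash:
                return False
            if _digest(prev_hash, entry["record"]) != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True


if __name__ == "__main__":
    log = EvidenceLog()
    log.append({"agent": "a1", "action": "read", "decision": "allow"})
    log.append({"agent": "a1", "action": "export", "decision": "deny"})
    print(log.verify())
```

Writing the entry from inside the enforcement path, rather than from a separate monitoring agent, is what ties the record to the decision that was actually enforced.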

Delegated Authority with Constraints

The control plane must allow safe delegation. Agents need practical capabilities — you cannot lock everything down and expect utility. But delegation requires explicit scopes, conditional permissions, and runtime revocation. The distinction is between "this agent can do anything with these credentials" and "this agent can perform these specific actions, under these conditions, with this approval chain, revocable in real time."
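The difference between blanket credentials and constrained delegation can be sketched as a grant object that the control plane checks on every action. Everything here is hypothetical: the `Grant` class, its fields, and the email example are assumptions for illustration, not a prescribed schema.

```python
from dataclasses import dataclass
from typing import Any, Callable, Dict, FrozenSet


@dataclass
class Grant:
    """Hypothetical delegated authority: scoped, conditional, revocable."""

    agent_id: str
    actions: FrozenSet[str]                      # explicit scope
    condition: Callable[[Dict[str, Any]], bool]  # conditional permission
    expires_at: float                            # seconds, same clock as `now`
    revoked: bool = False

    def permits(self, action: str, context: Dict[str, Any], now: float) -> bool:
        # Revocation and expiry are checked first, on every call.
        if self.revoked or now >= self.expires_at:
            return False
        if action not in self.actions:
            return False
        return self.condition(context)

    def revoke(self) -> None:
        # Takes effect on the next permits() check.
        self.revoked = True


if __name__ == "__main__":
    grant = Grant(
        agent_id="mail-agent",
        actions=frozenset({"send_email"}),
        condition=lambda ctx: ctx.get("recipient", "").endswith("@example.com"),
        expires_at=1000.0,
    )
    print(grant.permits("send_email", {"recipient": "a@example.com"}, now=10.0))
    grant.revoke()
    print(grant.permits("send_email", {"recipient": "a@example.com"}, now=10.0))
```

Because the grant is evaluated per action rather than once at session start, revocation does not have to wait for a credential to expire.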

Blast Radius Management

The architecture must minimize worst-case impact. That means limiting what tools an agent can reach, what data it can touch, and how far it can chain actions without human or programmatic approval. If you have not modeled the blast radius of an agent operating at the boundary of its permissions, you have not governed the agent — you have merely deployed it.
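As a rough illustration of bounding how far an agent can chain actions, the sketch below tracks a per-session tool allowlist and a cap on consecutive calls. `SessionBudget`, `BudgetExceeded`, and the limit values are assumed names and numbers; a real control plane would likely also bound data volume and cost.

```python
from dataclasses import dataclass, field
from typing import FrozenSet, List


class BudgetExceeded(Exception):
    """Raised when a session leaves its allowlist or exhausts its chain limit."""


@dataclass
class SessionBudget:
    allowed_tools: FrozenSet[str]
    max_chain_length: int
    calls: List[str] = field(default_factory=list)

    def authorize(self, tool_name: str) -> None:
        # Limit which tools the agent can reach at all.
        if tool_name not in self.allowed_tools:
            raise BudgetExceeded(f"tool not in allowlist: {tool_name}")
        # Limit how many actions can be chained without an approval.
        if len(self.calls) >= self.max_chain_length:
            raise BudgetExceeded("chain limit reached; approval required")
        self.calls.append(tool_name)

    def reset_after_approval(self) -> None:
        # Called only after a human or programmatic approval is recorded.
        self.calls.clear()


if __name__ == "__main__":
    budget = SessionBudget(frozenset({"search", "summarize"}), max_chain_length=2)
    budget.authorize("search")
    budget.authorize("summarize")
    try:
        budget.authorize("search")
    except BudgetExceeded as exc:
        print(f"stopped: {exc}")
```

The worst case for this session is then easy to state: at most two calls, drawn from two tools, before something outside the agent has to approve more.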

Why the Control Plane Matters Operationally

Consider a finance use case. An agent synthesizes market data, identifies an arbitrage opportunity, and initiates trades. If that agent's trade execution path is not mediated by an enforcement boundary, you rely entirely on pre-deployment testing and trust. If it is mediated, the control plane can enforce trade limits, require approvals for positions above thresholds, and block data flows that would expose sensitive counterparty information. The control plane reduces blast radius and produces evidence that a compliance team can actually audit.

Or consider a data gateway scenario. An agent requests access to a customer dataset to generate personalized recommendations. The control plane can enforce purpose-bound access, redact sensitive fields, and require runtime attestations that the data will not be exported or cached beyond the operation. Without that control plane, the dataset is exposed the moment the agent has credentials — and post-hoc audit cannot undo the leakage.
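The purpose-bound access and redaction steps in the data gateway scenario might look like the following sketch. The purpose names, field names, and `fetch_for_purpose` function are hypothetical, and the runtime attestation step is deliberately left out because the text does not say how it would work.

```python
from typing import Any, Dict, List, Set

# Hypothetical policy table: declared purpose -> fields the caller may see.
PURPOSE_FIELDS: Dict[str, Set[str]] = {
    "recommendations": {"customer_id", "purchase_history", "segment"},
    "billing_support": {"customer_id", "email", "invoice_total"},
}

REDACTED = "[REDACTED]"


def fetch_for_purpose(rows: List[Dict[str, Any]], purpose: str) -> List[Dict[str, Any]]:
    """Return copies of rows with every field outside the purpose redacted."""
    if purpose not in PURPOSE_FIELDS:
        # No declared purpose means no access at all.
        raise PermissionError(f"no access defined for purpose: {purpose}")
    allowed = PURPOSE_FIELDS[purpose]
    return [
        {key: (value if key in allowed else REDACTED) for key, value in row.items()}
        for row in rows
    ]


if __name__ == "__main__":
    customers = [
        {
            "customer_id": 7,
            "email": "pat@example.com",
            "purchase_history": ["tent", "stove"],
            "segment": "outdoor",
        }
    ]
    print(fetch_for_purpose(customers, "recommendations"))
```

Redacting at the gateway means the agent never holds the sensitive value, which is the property that post-hoc audit cannot provide.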

These are not edge cases. These are the default operating conditions for any enterprise deploying agentic AI into production workflows.

Operational Trade-Offs and Realities

You Will Have to Change Workflows

Runtime governance forces an engineering trade-off: tighter control means more developer friction. This is not a philosophical negotiation. It is a systems design problem: where to place enforcement so you minimize risk while preserving the value that agentic systems were deployed to deliver. Organizations that treat this as a security-versus-innovation debate are misframing the problem. The correct frame is: where does enforcement need to sit so the system is both useful and governable?

Enforcement Is Not Binary

Good control planes offer policy-driven mediation: allow, deny, require human approval, or transform data. They are programmable and observable. The goal is not to block everything — it is to ensure that every action with enterprise impact passes through a decision point where policy is evaluated and evidence is created.
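The four outcomes named above can be sketched as a single decision point that returns a typed result. The action names, the 100,000 threshold, and the `ssn` field are assumptions chosen for illustration. The sketch defaults to deny for unlisted actions, which is one reasonable design choice rather than something the text mandates.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Any, Dict, Optional


class Outcome(Enum):
    ALLOW = "allow"
    DENY = "deny"
    REQUIRE_APPROVAL = "require_approval"
    TRANSFORM = "transform"


@dataclass(frozen=True)
class Decision:
    outcome: Outcome
    reason: str  # recorded as evidence alongside the outcome
    payload: Optional[Dict[str, Any]] = None


# Hypothetical allowlist and threshold.
KNOWN_ACTIONS = {"execute_trade", "export_report", "read_market_data"}
APPROVAL_THRESHOLD = 100_000


def evaluate(action: str, payload: Dict[str, Any]) -> Decision:
    if action not in KNOWN_ACTIONS:
        return Decision(Outcome.DENY, "action not in allowlist")
    if action == "execute_trade" and payload.get("notional", 0) > APPROVAL_THRESHOLD:
        return Decision(Outcome.REQUIRE_APPROVAL, "notional above threshold", payload)
    if action == "export_report" and "ssn" in payload:
        cleaned = {k: v for k, v in payload.items() if k != "ssn"}
        return Decision(Outcome.TRANSFORM, "sensitive field stripped", cleaned)
    return Decision(Outcome.ALLOW, "within policy", payload)


if __name__ == "__main__":
    for action, payload in [
        ("execute_trade", {"notional": 5_000}),
        ("execute_trade", {"notional": 250_000}),
        ("export_report", {"title": "Q3", "ssn": "000-00-0000"}),
        ("drop_table", {}),
    ]:
        decision = evaluate(action, payload)
        print(action, decision.outcome.value, decision.reason)
```

Returning a reason with every decision is what lets the same call produce both the enforcement result and the evidence record.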

Instrumentation Matters

A control plane that lacks deep observability leaves incident response blind. Evidence must be usable by audit, compliance, and forensics teams — not just raw logs, but tamper-evident records with correlated intent traces and contextual metadata about tool usage, data flows, and approval chains.

Revocation Must Be Fast

When an agent misbehaves, the control plane must be able to revoke privileges and contain the session immediately. Revocation velocity directly limits blast radius. If revoking an agent's permissions takes minutes instead of milliseconds, the damage window is orders of magnitude longer, and an agent chaining tool invocations can do far more within it than your risk tolerance allows.

The Market Is Misframing Governance

Boards and executives hear "governance" and think policies and committees. That confusion is not just an inconvenience — it is fatal when applied to systems that act autonomously.

Governance is not real until it can change behavior at runtime and provide evidence that it did. A policy document that never enforced a decision meets neither test, however complete it looks. The gap between governance intent and governance effect is arguably the largest unpriced risk in enterprise AI today.

The ACR Standard and the Path to Practicality

Practicality matters more than idealism. The ACR Standard names the problem and frames a viable architectural response: a control plane that mediates AI intent to action, enforces policy at runtime, provides auditable evidence, and constrains delegated authority.

The standard is an operational model you can evaluate and implement. It is not an ethics pamphlet. The ACR Control Plane repositories demonstrate how these ideas translate to working enforcement — runtime governance that is deployed, tested, and observable.

What Executives Need to Do Now

Stop Outsourcing Governance to Documents

Require that any program for agentic AI include a runtime enforcement strategy as a core deliverable — not an appendix, not a future-state roadmap, but a funded, engineered capability.

Reframe Risk Acceptance

If an agent can act without traversing an enforcement boundary, treat that capability as unacceptable until mitigation exists. Require proof that the control plane is the trust path for all high-impact actions. If the proof is a policy document instead of a running system, the risk has not been mitigated.

Invest in Control Plane Capabilities

Prioritize projects that deliver enforcement, observability, and fast revocation. These are security and control investments with measurable ROI in reduced blast radius and demonstrable evidence for auditors and regulators.

Make the Board Ask the Right Questions

Ask for evidence that governance has a callable enforcement boundary. Ask for sample incident scenarios showing containment and revocation. If the answers are slideware, escalate. The board's job is not to understand the architecture — it is to verify that governance can change agent behavior in production, not just describe it in a document.

Agentic AI changes where risk is realized. If governance remains a pre-deployment checklist or a document repository, the enterprise is exposed at the exact point where exposure matters most — the moment an agent acts.

Runtime governance must be the center of the conversation. The control plane is not an optional architectural pattern; it is the enforcement boundary that turns governance from theater into measurable, auditable risk control.

Evaluate the ACR Standard at autonomouscontrol.io. Review the ACR Control Plane repositories for operational evidence. And if you are accountable for AI risk, start treating runtime control as your primary lever for safety, containment, and compliance.
